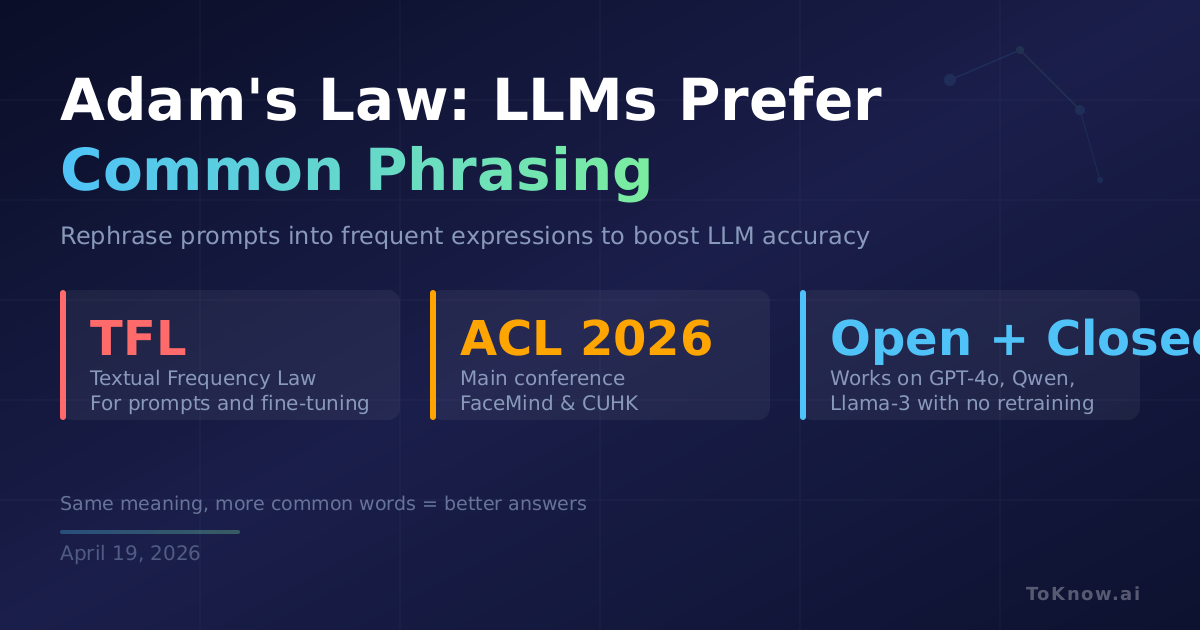

Researchers at FaceMind and the Chinese University of Hong Kong have formalized something prompt engineers have long suspected: LLMs are better at understanding sentences phrased the way most people on the internet phrase them. Their paper, Adam’s Law, proposes a Textual Frequency Law (TFL) stating that frequently occurring textual expressions are systematically easier for LLMs to handle, both at prompting time and during fine-tuning. The team built an input paraphraser that estimates sentence-level frequency using web resources, then rewrites prompts into more common phrasings without changing meaning. The same principle drives a fine-tuning data filter that prefers high-frequency expressions. Across reasoning, code, and instruction-following benchmarks, applying TFL improved performance for both closed-source models like GPT-4o and open-weight models like Qwen2.5 and Llama-3, with no architectural changes.
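The core move, choosing the most common phrasing among meaning-equivalent candidates, can be sketched in a few lines. This is a toy illustration and not the paper's method. The tiny `REFERENCE_CORPUS`, the smoothed word-level score, and the function names are all assumptions made for the example. The paper estimates sentence-level frequency from web resources, and the candidate paraphrases here are supplied by hand.

```python
import math
import re
from collections import Counter

# Hypothetical stand-in for web-scale frequency counts (assumption for this sketch).
REFERENCE_CORPUS = """
what is the sum of two and three
what is the capital of france
how do i find the sum of a list
please tell me what the answer is
"""

def tokenize(text):
    """Lowercase the text and split it into simple word tokens."""
    return re.findall(r"[a-z']+", text.lower())

WORD_COUNTS = Counter(tokenize(REFERENCE_CORPUS))
TOTAL = sum(WORD_COUNTS.values())
VOCAB = len(WORD_COUNTS)

def frequency_score(sentence):
    """Mean log-probability per word, with add-one smoothing for unseen words."""
    words = tokenize(sentence)
    if not words:
        return float("-inf")
    log_probs = [
        math.log((WORD_COUNTS[w] + 1) / (TOTAL + VOCAB + 1)) for w in words
    ]
    return sum(log_probs) / len(log_probs)

def pick_most_common_phrasing(candidates):
    """Return the candidate with the highest frequency score."""
    return max(candidates, key=frequency_score)

# Two hand-written phrasings of the same request.
candidates = [
    "Compute the aggregate of two and three.",
    "What is the sum of two and three?",
]
best = pick_most_common_phrasing(candidates)
print(best)
```

Averaging per word keeps longer sentences from being penalized simply for their length. A real system would also need to check that each candidate preserves the original meaning.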

The practical takeaway is concrete. If your carefully worded prompt underperforms, the problem may not be the model: your phrasing may simply be rare in the training data. Running the same intent through a paraphraser that biases toward common expressions can recover lost accuracy without rewriting prompts by hand. For teams fine-tuning open models, filtering training data by frequency offers a cheap quality lever to try before touching hyperparameters or scale.
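For the fine-tuning side, a frequency filter might look roughly like the sketch below. It ranks training examples by a smoothed word-frequency score and keeps the top fraction. The scorer, the `keep_fraction` parameter, and the sample data are hypothetical and chosen for illustration. The paper's actual filter and frequency estimator may differ.

```python
import math
import re
from collections import Counter

def build_scorer(reference_texts):
    """Build a scoring function from reference texts (a stand-in for web counts)."""
    counts = Counter(
        w for t in reference_texts for w in re.findall(r"[a-z']+", t.lower())
    )
    total = sum(counts.values())
    vocab = len(counts)

    def score(text):
        # Mean smoothed log-probability per word; higher means more common phrasing.
        words = re.findall(r"[a-z']+", text.lower())
        if not words:
            return float("-inf")
        return sum(
            math.log((counts[w] + 1) / (total + vocab + 1)) for w in words
        ) / len(words)

    return score

def filter_by_frequency(examples, score, keep_fraction=0.5):
    """Keep the highest-scoring fraction of examples (at least one if any exist)."""
    ranked = sorted(examples, key=score, reverse=True)
    keep = max(1, math.ceil(len(ranked) * keep_fraction))
    return ranked[:keep]

# Hypothetical reference texts and training instructions.
reference = [
    "write a function that returns the sum of a list",
    "return the largest number in a list",
    "write a function that reverses a string",
]
examples = [
    "Write a function that returns the largest number in a list.",
    "Devise a subroutine yielding the maximal element of an array.",
    "Write a function that reverses a string.",
    "Fabricate a procedure inverting a character sequence.",
]

score = build_scorer(reference)
kept = filter_by_frequency(examples, score, keep_fraction=0.5)
for example in kept:
    print(example)
```

Keeping a fixed fraction rather than applying an absolute threshold avoids having to calibrate scores across corpora, though it discards rare phrasings that may carry useful signal.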

This reframes prompt engineering as a frequency-matching problem rather than a rhetorical one. It also refines a finding from a related study, which showed that prompt specificity, not length, drives code quality: specificity helps, but only when it is expressed in phrasing the model has seen often.

Citation

@misc{kabui2026,
  author = {{Kabui, Charles}},
  title = {Adam’s {Law:} {LLMs} {Work} {Better} {When} {You} {Rephrase} {Your} {Prompt} {Into} {Words} {They’ve} {Seen} {More} {Often}},
  date = {2026-04-19},
  url = {https://toknow.ai/posts/adams-law-textual-frequency-llm-paraphrasing-fine-tuning/},
  langid = {en-GB}
}