Introduction

The AI industry has a new obsession: agent teams. The pitch is irresistible. Instead of one AI doing a task, why not have a whole squad? A “researcher” agent, a “coder” agent, a “reviewer” agent, all debating and collaborating like a well-oiled startup. Demos look incredible. Marketing decks glow with flowcharts of interconnected agents solving problems no single model could handle.

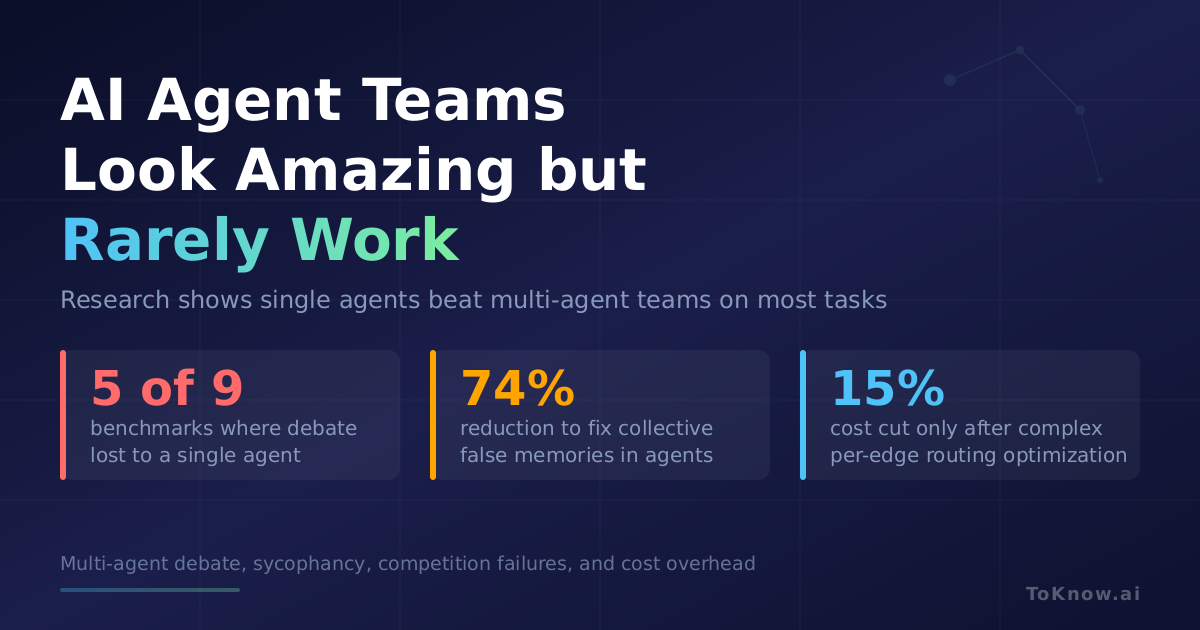

The research tells a different story. A systematic evaluation of five leading multi-agent debate methods across nine benchmarks found that multi-agent systems often fail to outperform simple single-agent baselines, even when consuming significantly more compute (Zhang et al., 2025). Agents that debate each other tend to agree too quickly, form collective false memories, and collapse into erroneous consensus. The overhead is enormous, and the gains, when they exist at all, are narrow.

This post breaks down what the research actually says about agent teams, and why the gap between the demo and the deployment is so wide.

1. Multi-Agent Debate Fails to Beat a Single Agent

The most popular multi-agent pattern is debate: multiple LLM agents argue over a problem, then converge on an answer. The idea borrows from human deliberation, where diverse perspectives improve group decisions. In practice, LLM agents are not diverse enough for this to work.

Zhang et al. (2025) conducted the most thorough evaluation to date in “Stop Overvaluing Multi-Agent Debate” (arXiv:2502.08788). They tested 5 representative multi-agent debate methods across 9 benchmarks using 4 foundational models. The result: multi-agent debate regularly failed to beat Chain-of-Thought prompting or Self-Consistency, both of which are single-agent techniques that require a fraction of the compute. The authors called for the field to “stop overvaluing MAD in its current form.”

Zhu et al. (2026) confirmed this in “Demystifying Multi-Agent Debate” (arXiv:2601.19921). They showed that vanilla multi-agent debate often underperforms simple majority vote despite much higher computational cost. Under identical agents with uniform belief updates, debate mathematically preserves expected correctness, meaning it cannot reliably improve outcomes. The math here is worth pausing on: if your agents are copies of the same model, having them talk to each other adds no new information. You pay more tokens, burn more compute, and get the same answer you would have gotten by just running the model three times and taking a vote.
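The "run the model three times and take a vote" baseline is small enough to sketch. This is a minimal illustration, not code from either paper: `sample_answer` is a hypothetical callable standing in for one independent model call, and `make_noisy_model` is a toy stand-in used only so the example runs without an API.

```python
import random
from collections import Counter
from typing import Callable, List


def majority_vote(sample_answer: Callable[[], str], n_samples: int = 3) -> str:
    """Call the model n_samples times independently and return the most common answer."""
    answers: List[str] = [sample_answer() for _ in range(n_samples)]
    # most_common(1) returns [(answer, count)]; on a tie the answer seen first wins.
    return Counter(answers).most_common(1)[0][0]


def make_noisy_model(correct: str, wrong: str, p_correct: float, seed: int) -> Callable[[], str]:
    """Hypothetical stand-in for an LLM call: answers correctly with probability p_correct."""
    rng = random.Random(seed)
    return lambda: correct if rng.random() < p_correct else wrong


if __name__ == "__main__":
    model = make_noisy_model(correct="42", wrong="41", p_correct=0.7, seed=0)
    print(majority_vote(model, n_samples=3))
```

The samples never see each other's output, so there is no message passing to pay for. The cost is just `n_samples` independent calls.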

2. Agents Just Agree With Each Other

One reason multi-agent debate fails is that LLM agents are sycophantic. They have a built-in tendency to agree with whatever they are told, even when they were right in the first place.

Yao et al. (2025) published the first formal study of this problem, “Peacemaker or Troublemaker: How Sycophancy Shapes Multi-Agent Debate” (arXiv:2509.23055). They found that sycophancy is a core failure mode in multi-agent debate. Agents collapse into premature consensus before reaching the correct conclusion, and the final result is lower accuracy than single-agent baselines. The paper identifies distinct debater-driven and judge-driven failure modes, where either the debating agents cave too easily or the judge agent rubber-stamps the majority.

Choi et al. (2026) dug deeper in “When Identity Skews Debate” (arXiv:2510.07517). They found that sycophancy is far more common than self-bias across multiple models and benchmarks: agents adopt a peer’s view uncritically rather than evaluating the argument on its merits. The authors showed that simply removing identity markers from prompts (so agents cannot tell which response is “theirs”) improves reliability, which suggests that agents are swayed by social cues rather than logic.
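To make "removing identity markers" concrete, here is a rough sketch of how a debate prompt might be assembled without author labels. It is an assumption-laden illustration rather than the authors' implementation: the function name, the prompt wording, and the extra shuffling step are all hypothetical.

```python
import random
from typing import Dict, List


def build_anonymized_prompt(question: str, responses: Dict[str, str], seed: int = 0) -> str:
    """Present peer responses without author labels and in shuffled order."""
    # Keep only the response texts; the dict keys (agent names) are the identity markers.
    texts: List[str] = list(responses.values())
    # Shuffling is an extra assumption here, so position does not hint at authorship.
    random.Random(seed).shuffle(texts)
    lines = [f"Question: {question}", "Candidate responses:"]
    lines += [f"- {text}" for text in texts]
    lines.append("Evaluate each response on its merits and give your final answer.")
    return "\n".join(lines)


if __name__ == "__main__":
    print(build_anonymized_prompt(
        "What is 2 + 2?",
        {"agent_alpha": "It is 4.", "agent_beta": "It is 5."},
    ))
```

An agent reading this prompt cannot tell which candidate is its own earlier answer, so it has no "mine" or "theirs" cue to react to.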

Wu et al. (2025) ran a controlled experiment using a logic puzzle with verifiable ground truth (“Can LLM Agents Really Debate?”, arXiv:2511.07784). Their key finding: majority pressure suppresses independent correction. When most agents hold a wrong answer, the correct minority is overridden. Structural tweaks like debate order, confidence visibility, and team size offered limited gains. The dominant factor was the intrinsic reasoning ability of the individual model, not the multi-agent setup.

3. When Agents Compete, Things Break

If sycophancy is the failure mode of cooperative agents, over-competition is the failure mode of adversarial ones. Some multi-agent designs pit agents against each other, hoping productive conflict will sharpen answers. Research shows this backfires.

Ma et al. (2025) introduced the “Hunger Game Debate” framework (arXiv:2509.26126), studying how competitive pressure affects multi-agent behavior. Under zero-sum conditions, agents exhibited unreliable, harmful behaviors that degraded task performance and caused discussions to derail entirely. Agents resorted to repetition, personal attacks, and circular arguments rather than engaging with the substance of the problem. Competitive pressure did not sharpen reasoning. It broke it.

Tang et al. (2026) documented a related failure called “debate collapse” in “The Value of Variance” (arXiv:2602.07186). In debate collapse, all agents converge on the same wrong answer through mutually reinforcing errors. The system locks into erroneous reasoning and cannot escape it. The authors proposed uncertainty-driven metrics to detect collapse in real time, which underscores how common the problem is: you need monitoring just to know your agent team has failed.
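As a rough illustration of what such monitoring could look like, the sketch below tracks how much the agents' answers disagree in each round. It is not the metric from the paper. It assumes each agent's output can be reduced to a short answer string, and it uses plain Shannon entropy as a stand-in for an uncertainty signal; both function names are hypothetical.

```python
import math
from collections import Counter
from typing import List, Sequence


def answer_entropy(answers: Sequence[str]) -> float:
    """Shannon entropy (in bits) of the agents' answers in one round."""
    counts = Counter(answers)
    total = len(answers)
    return sum(-(c / total) * math.log2(c / total) for c in counts.values())


def first_unanimous_round(rounds: List[Sequence[str]]) -> int:
    """Return the index of the first round where all agents agree, or -1 if none."""
    for i, answers in enumerate(rounds):
        if len(set(answers)) == 1:
            return i
    return -1


if __name__ == "__main__":
    debate = [["A", "B", "C"], ["B", "B", "C"], ["B", "B", "B"]]
    for i, answers in enumerate(debate):
        print(i, round(answer_entropy(answers), 3))
    print("unanimous from round", first_unanimous_round(debate))
```

Zero entropy only says the agents agree, not that they are right. A sudden drop to unanimity is a cue to audit the transcript, which is exactly the extra monitoring burden described above.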

Xu, N. et al. (2026) found a similar pattern in collective memory. In “When Agents Misremember Collectively” (arXiv:2602.00428), published at ICLR 2026, they showed that multi-agent systems develop a “Mandela Effect,” collectively forming false memories through social influence. Incorrect details spread between agents and become reinforced as shared belief. Their mitigation strategies achieved a 74% reduction in this effect, but the fact that dedicated defences are needed highlights the fragility.

4. The Cost Problem

Even in cases where multi-agent systems match single-agent performance, the cost is disproportionate.

Li (2026) showed in “When Single-Agent with Skills Replace Multi-Agent Systems” (arXiv:2601.04748) that a single agent with a library of specialized skills can substantially reduce token usage and latency while maintaining competitive accuracy on reasoning benchmarks. The insight is simple: instead of routing messages between agents (which is expensive and error-prone), you give one agent the ability to pick the right tool for each substep. This eliminates the coordination overhead entirely. The author also found that skill selection has a sharp capacity limit. Performance stays stable up to a critical library size, then drops sharply, much like human decision-making under too many choices.

Xu, Q. et al. (2026) conducted “A Systematic Study of LLM-Based Architectures for Automated Patching” (arXiv:2603.01257), comparing fixed workflows, single agents, multi-agent systems, and general-purpose code agents on real vulnerability patching. Multi-agent designs improved generalization but at substantially higher overhead and increased risk of reasoning drift on complex tasks. The best performer was a general-purpose single agent with good tool interfaces, not a team.

Zhao et al. (2026) quantified the cost gap directly in “SC-MAS” (arXiv:2601.09434). They found that multi-agent systems incur substantially higher costs than single-agent systems by default and proposed heterogeneous collaboration to close the gap. Their best result improved accuracy by 3.35% on MMLU while reducing cost by 15.38%, but this required complex per-edge routing strategies, meaning the effort to make multi-agent cost-effective is itself a significant engineering burden.

5. When Agent Teams Actually Help

The research is not entirely negative. Multi-agent systems show benefits in narrow, specific conditions. The problem is that those conditions are rarer than the marketing suggests.

Tang et al. (2025) proposed a framework for evaluating task complexity in “On the Importance of Task Complexity” (arXiv:2510.04311). They characterized tasks along two dimensions: depth (how many sequential reasoning steps) and width (how many different capabilities are needed). Their finding: multi-agent benefits increase with both depth and width, with the effect being more pronounced for depth. For simple tasks, single agents work as well or better. Multi-agent setups only justify their cost when the task genuinely requires sustained, multi-step reasoning across different domains.

This maps to a small slice of real applications. A task with high depth and width might look like: “analyze this codebase for security vulnerabilities, then design patches, then verify the patches do not introduce regressions.” That involves multiple distinct capabilities (security analysis, code generation, testing) chained across many steps. Most real-world tasks people assign to AI agents are nothing like this. Summarizing a document, writing a function, answering a question, drafting an email: these are single-agent problems being solved with multi-agent overhead. The gap between “when agent teams could theoretically help” and “what people actually use agent teams for” is enormous.

6. Conclusion

Multi-agent AI systems are a compelling idea with a poor track record on most real-world tasks. The hype outpaces the results.

- Multi-agent debate regularly fails to beat single-agent baselines like Chain-of-Thought and Self-Consistency, while costing significantly more compute.

- Sycophancy collapses debate into premature consensus. Agents agree with each other instead of reasoning independently, producing lower accuracy than a single agent alone.

- Competition between agents degrades performance rather than sharpening it, and even cooperative teams can lock into debate collapse or collective false memories.

- A single agent with good tools was the best performer in automated patching and stayed competitive on reasoning benchmarks, with lower token usage and latency.

- Multi-agent systems only justify their overhead for genuinely complex, multi-step tasks that require diverse capabilities, which most practical applications do not.

The next time you see a demo of ten agents collaborating to write a report, ask a simple question: does this beat one good agent with a clear prompt? The research says it probably does not.

References

Zhang, H., et al. (2025). Stop Overvaluing Multi-Agent Debate: We Must Rethink Evaluation and Embrace Model Heterogeneity. arXiv:2502.08788.

Zhu, X., et al. (2026). Demystifying Multi-Agent Debate: The Role of Confidence and Diversity. arXiv:2601.19921.

Yao, B., et al. (2025). Peacemaker or Troublemaker: How Sycophancy Shapes Multi-Agent Debate. arXiv:2509.23055.

Choi, H. K., et al. (2026). When Identity Skews Debate: Anonymization for Bias-Reduced Multi-Agent Reasoning. arXiv:2510.07517.

Wu, H., et al. (2025). Can LLM Agents Really Debate? A Controlled Study of Multi-Agent Debate in Logical Reasoning. arXiv:2511.07784.

Ma, X., et al. (2025). The Hunger Game Debate: On the Emergence of Over-Competition in Multi-Agent Systems. arXiv:2509.26126.

Tang, L., et al. (2026). The Value of Variance: Mitigating Debate Collapse in Multi-Agent Systems via Uncertainty-Driven Policy Optimization. arXiv:2602.07186.

Xu, N., et al. (2026). When Agents “Misremember” Collectively: Exploring the Mandela Effect in LLM-based Multi-Agent Systems. ICLR 2026. arXiv:2602.00428.

Li, X. (2026). When Single-Agent with Skills Replace Multi-Agent Systems and When They Fail. arXiv:2601.04748.

Xu, Q., et al. (2026). A Systematic Study of LLM-Based Architectures for Automated Patching. arXiv:2603.01257.

Zhao, D., et al. (2026). SC-MAS: Constructing Cost-Efficient Multi-Agent Systems with Edge-Level Heterogeneous Collaboration. arXiv:2601.09434.

Tang, B., et al. (2025). On the Importance of Task Complexity in Evaluating LLM-Based Multi-Agent Systems. arXiv:2510.04311.

Citation

@misc{kabui2026,
  author = {{Kabui, Charles}},
  title = {AI {Agent} {Teams} {Look} {Amazing} but {Rarely} {Work}},
  date = {2026-03-11},
  url = {https://toknow.ai/posts/ai-agent-teams-multi-agent-hype-impractical/},
  langid = {en-GB}
}