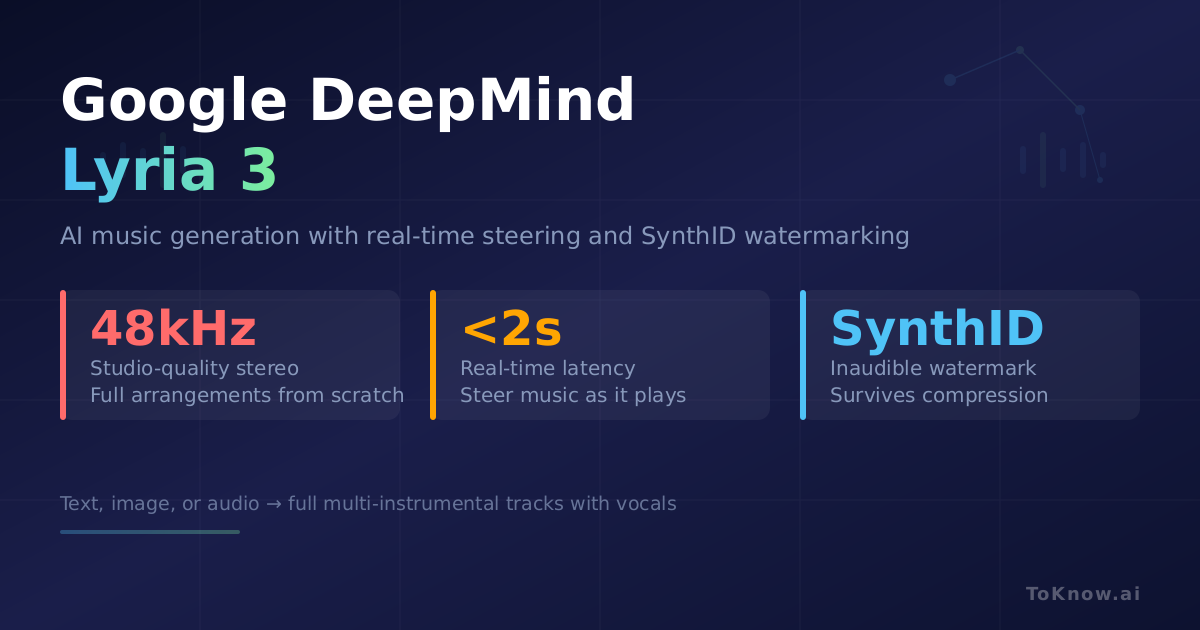

Google DeepMind has released Lyria 3, its most advanced music generation model. From text, image, or audio inputs, the model generates 30-second tracks in studio-quality stereo at 48 kHz. Rather than stitching together pre-made loops, it generates full musical arrangements from scratch, including vocals and multi-instrumental parts. Lyria 3 is now integrated into the Gemini app: describe a mood, genre, or set of instruments, and it returns a finished audio file. This is harder than it sounds, because music is continuous and multi-layered: melody, harmony, rhythm, and timbre must all stay coherent from the first second to the last.
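To put "30 seconds of 48 kHz stereo" in concrete terms, the arithmetic below sizes one clip as raw, uncompressed PCM. The article does not state a bit depth, so 16-bit samples are an assumption made only for illustration, and the helper name `pcm_size` is hypothetical.

```python
# Back-of-the-envelope size of one uncompressed 30-second stereo clip.
# ASSUMPTION: the article gives 48 kHz stereo but no bit depth;
# 16-bit (2-byte) PCM is used here purely for illustration.

DURATION_S = 30          # track length stated in the article
SAMPLE_RATE_HZ = 48_000  # 48 kHz, as stated
CHANNELS = 2             # stereo
BYTES_PER_SAMPLE = 2     # assumed 16-bit PCM


def pcm_size(duration_s: int, rate_hz: int, channels: int, bytes_per_sample: int) -> dict:
    """Hypothetical helper: frame count, total sample count, and raw byte size."""
    frames = duration_s * rate_hz       # one frame = one sample per channel
    samples = frames * channels         # individual samples across all channels
    size_bytes = samples * bytes_per_sample
    return {"frames": frames, "samples": samples, "bytes": size_bytes}


if __name__ == "__main__":
    info = pcm_size(DURATION_S, SAMPLE_RATE_HZ, CHANNELS, BYTES_PER_SAMPLE)
    print(info)  # {'frames': 1440000, 'samples': 2880000, 'bytes': 5760000}
    print(f"{info['bytes'] / 1_000_000:.2f} MB")  # 5.76 MB
```

Under the 16-bit assumption a clip comes to roughly 5.76 MB before any compression; assuming 24-bit samples instead would scale that by 1.5, to about 8.64 MB.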

The real shift is the Lyria RealTime API. Traditional models work like a jukebox: enter a prompt, then wait for a file. Lyria RealTime instead generates audio as a live stream in 2-second chunks, and users can steer style, tempo, and instrumentation while the track plays, with under 2 seconds of delay. The Music AI Sandbox builds on this, turning a hummed melody into a full orchestral arrangement or a chord progression into a vocal choir.

Every track carries a SynthID watermark embedded in the audio waveform. It is inaudible to humans but remains detectable by software even after MP3 compression or analog re-recording. As AI-generated music floods streaming platforms, this kind of durable attribution addresses a real trust problem for creators and listeners. Lyria 3 competes directly with Suno and Udio, but adds multimodal input and Google’s infrastructure advantage.
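The chunked, steerable streaming that Lyria RealTime is described as using can be illustrated with a toy simulation. This is a minimal sketch under stated assumptions, not the Lyria RealTime API: the names `Controls`, `Chunk`, `stream`, and `steering_delay` are hypothetical, no audio is synthesized, and it assumes a steering change is picked up at the next 2-second chunk boundary.

```python
import math
from dataclasses import dataclass
from typing import Iterator

# HYPOTHETICAL toy model of chunked streaming with live steering.
# Names are illustrative only; this is NOT the Lyria RealTime API,
# and no audio is generated (each chunk is just a descriptor).

SAMPLE_RATE_HZ = 48_000  # 48 kHz, as stated in the article
CHUNK_S = 2.0            # 2-second chunks, as stated in the article


@dataclass(frozen=True)
class Controls:
    """Steering parameters a listener might change mid-stream (assumed fields)."""
    style: str
    bpm: int


@dataclass(frozen=True)
class Chunk:
    index: int
    start_s: float
    frames: int          # sample frames per channel in this chunk
    controls: Controls   # controls in effect when the chunk was generated


def stream(initial: Controls,
           changes: list[tuple[float, Controls]],
           n_chunks: int) -> Iterator[Chunk]:
    """Yield chunks in order. ASSUMPTION: a change sent at time t takes
    effect at the first chunk boundary at or after t."""
    pending = sorted(changes, key=lambda c: c[0])
    current = initial
    frames = int(CHUNK_S * SAMPLE_RATE_HZ)
    for i in range(n_chunks):
        start = i * CHUNK_S
        # Apply every change that arrived at or before this boundary.
        while pending and pending[0][0] <= start:
            current = pending.pop(0)[1]
        yield Chunk(i, start, frames, current)


def steering_delay(t_change: float) -> float:
    """Seconds from sending a change until the next chunk boundary."""
    next_boundary = math.ceil(t_change / CHUNK_S) * CHUNK_S
    return next_boundary - t_change


if __name__ == "__main__":
    opening = Controls(style="ambient piano", bpm=90)
    # A listener asks for a faster groove 3.5 s into playback.
    changes = [(3.5, Controls(style="drum and bass", bpm=170))]
    for chunk in stream(opening, changes, n_chunks=4):
        print(chunk.index, chunk.start_s, chunk.controls.style, chunk.controls.bpm)
    print(steering_delay(3.5))  # 0.5
```

In this idealized model a change always waits less than one chunk length (0.5 s for a change sent at 3.5 s), which is consistent with the article's under-2-seconds figure; a real system would also add generation and network time on top of the chunk wait.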

Citation

@misc{kabui2026,
  author = {{Kabui, Charles}},
  title = {Google {DeepMind} {Lyria} 3: {Music} {Generation} {Model} {Outputs} {48kHz} {Stereo} with {Real-Time} {Steering} and {SynthID} {Watermarking}},
  date = {2026-03-03},
  url = {https://toknow.ai/posts/google-deepmind-lyria-3-music-generation-synthid-realtime/},
  langid = {en-GB}
}