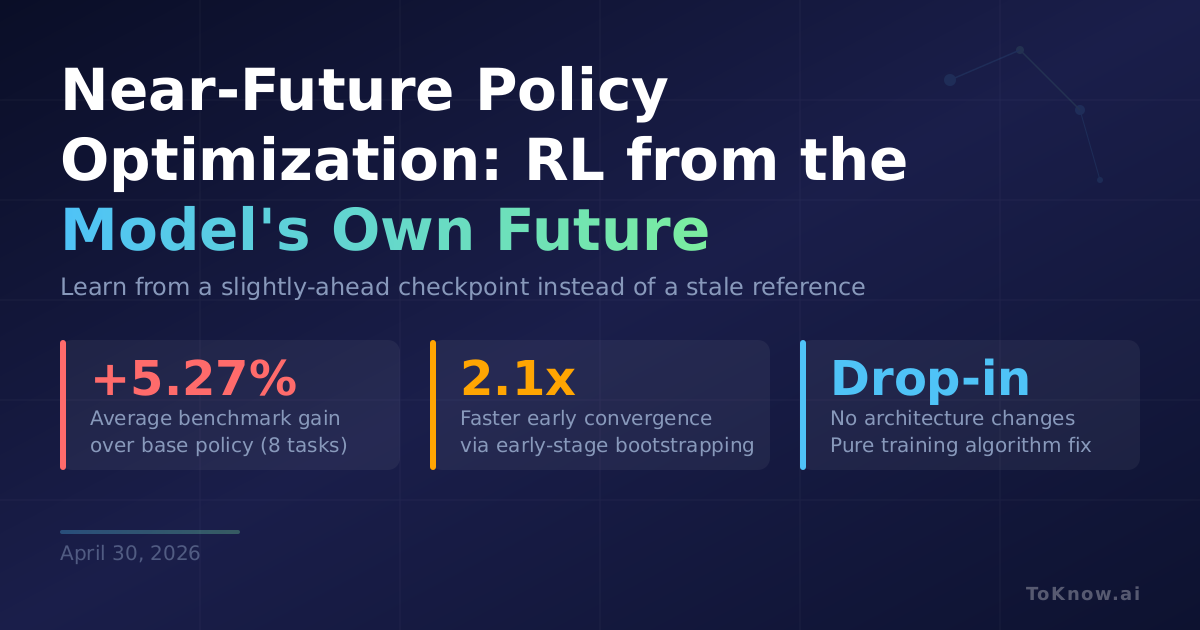

Researchers at CAS/UCAS and JD.COM introduced Near-Future Policy Optimization (NPO), a technique that improves reinforcement learning for language models by replacing the standard fixed reference policy with the model’s own near-future checkpoint. Standard RL methods like GRPO constrain training against the starting checkpoint, which goes stale as the model improves, creating increasingly noisy gradient signals. NPO instead uses a checkpoint from a few steps ahead in the same run: strong enough to solve problems the current model can’t, yet close enough in distribution that training stays stable. The authors formalize this as a quality-variance tradeoff where signal quality (can the source solve new problems?) rises with distance, but gradient variance rises faster. An adaptive variant, AutoNPO, monitors reward stagnation and entropy collapse to automatically trigger interventions and select the optimal lookahead. On Qwen3-VL-8B across eight multimodal reasoning benchmarks, AutoNPO improved average performance from 57.88 to 63.15, a +5.27% gain from a pure algorithm change with no architecture modification.

For teams running RL post-training on reasoning models, NPO is a drop-in replacement that accelerates early convergence by roughly 2.1x and breaks through late-stage plateaus where standard methods stall. Because the future checkpoint is so close to the current policy, importance sampling correction (normally required when mixing trajectories from different sources) can be dropped entirely, saving memory and compute. External teacher methods like LUFFY actually regress on some benchmarks because the distributional gap introduces too much gradient noise.

NPO is part of a “Self-Taught RLVR” research program exploring how models can learn from versions of themselves. The prior paper in the series, RLSD, used a privileged-information self. This one uses the temporal self. The pattern suggests post-training will increasingly replace external supervision with self-generated learning signals.

Sources:

- Near-Future Policy Optimization (arXiv)

- GRPO: DeepSeekMath (arXiv)

- RLSD: Self-Distilled RLVR (arXiv)

- Qwen3-VL Technical Report (arXiv)

Disclaimer: For information only. Accuracy or completeness not guaranteed. Illegal use prohibited. Not professional advice or solicitation. Read more: /terms-of-service

Reuse

Citation

@misc{kabui2026,

author = {{Kabui, Charles}},

title = {Near-Future {Policy} {Optimization:} {RL} {That} {Learns}

from the {Model’s} {Own} {Future} {Self}},

date = {2026-04-30},

url = {https://toknow.ai/posts/npo-near-future-policy-optimization-rl-learns-from-future-self/},

langid = {en-GB}

}